From brain-computer interfaces (BCIs) like Elon Musk’s Neuralink to AI that helps “locked-in” people write with their minds through smart brain medical devices, the convergence of neuroscience, medical devices, artificial intelligence (AI), and machine learning is accelerating our understanding of the human brain. It is opening new frontiers in diagnostics, therapeutic interventions, and the development of advanced medical devices. The research described in “Cinematic Mindscapes: High-quality Video Reconstruction from Brain Activity” marks a step from science fiction toward reality and offers insights into the complexities of human cognition and perception. Does anyone remember the movie “The Cell”?

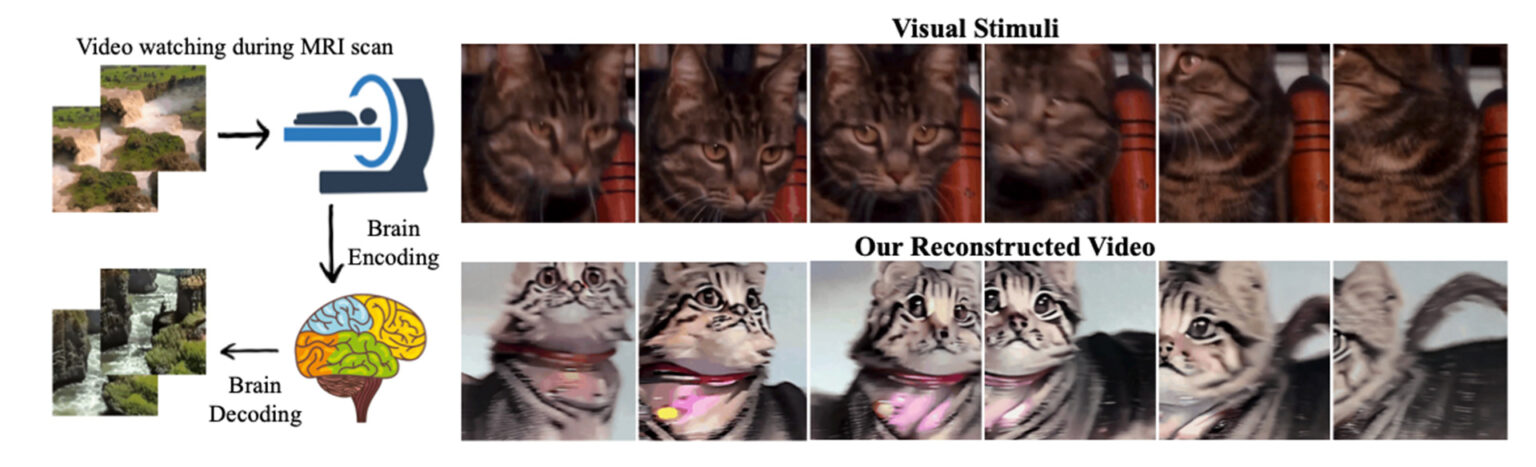

Researchers from Singapore and Hong Kong have developed an interdisciplinary approach to generating high-quality videos by decoding brain activity. Known as MinD-Video, the method relies on an fMRI encoder and an augmented Stable Diffusion model. The fMRI encoder learns generic visual features from brain activity through large-scale unsupervised learning, while the Stable Diffusion model is fine-tuned to generate high-quality videos from the encoded brain activity.

Cue the Record Scratch…Geekery Explained

If you are asking yourself, “What the hell is an fMRI, let alone an fMRI encoder? And augmented Stable Diffusion…what is this voodoo of which you speak?” Well, you are not alone in asking these questions.

Image Source: arXiv:2305.11675v1 [cs.CV] 19 May 2023

Functional Magnetic Resonance Imaging (fMRI) is widely used in neuroscience, psychology, diagnostics, and medicine to study brain function, diagnose neurological disorders, and develop treatments. By tracking the changes in blood oxygenation that accompany neural activity, this non-invasive imaging technique lets us see how the brain processes information, controls our actions, and regulates our emotions, greatly enhancing our ability to explore the inner workings of the human mind.

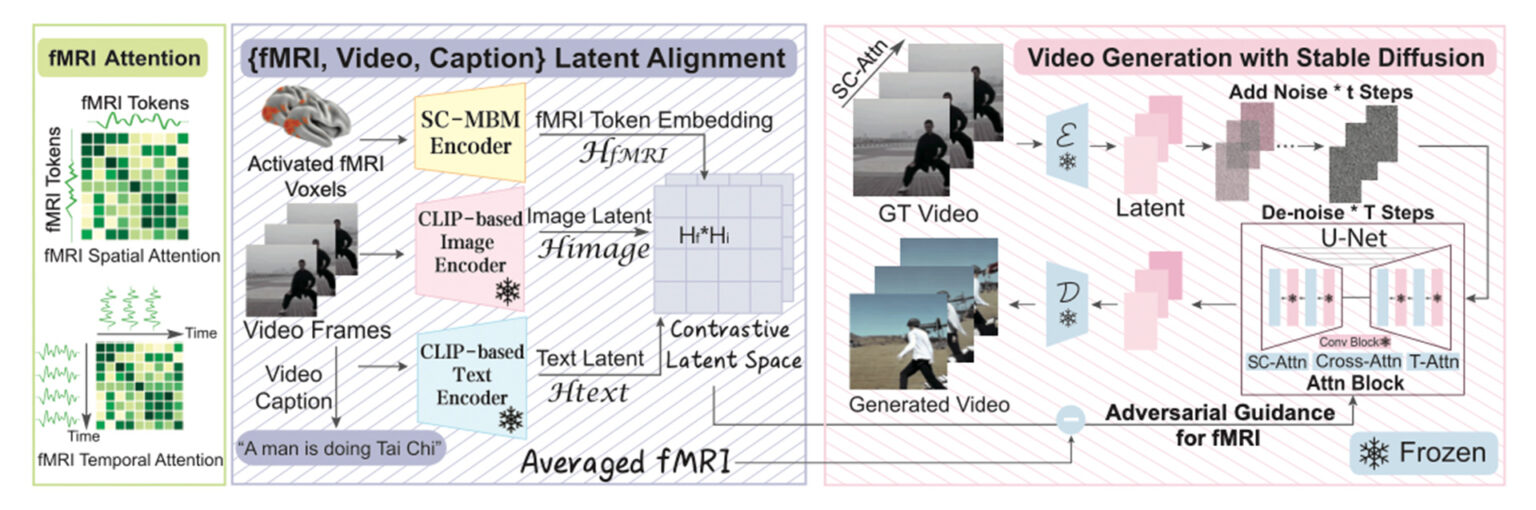

The fMRI encoder is a machine learning model that takes the complex patterns of brain activity captured by the fMRI scanner and converts them into compact numerical representations (features) that carry the meaningful information in those patterns. These features establish a relationship between the observed fMRI signals and the cognitive or perceptual processes happening in the brain, so that downstream models can use them to infer what a person is seeing, thinking, or intending.
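To make the idea concrete, here is a deliberately tiny sketch. It assumes a linear “encoder” with made-up weights that projects a voxel activity vector onto a two-dimensional embedding, then “decodes” the scan by picking the candidate stimulus whose embedding is most similar. The names `encode`, `decode`, and the `beach`/`city` candidates are hypothetical; MinD-Video’s actual encoder is a deep network trained on large amounts of fMRI data, not a hand-written matrix.

```python
import math

def encode(voxels: list[float], weights: list[list[float]]) -> list[float]:
    """Toy encoder: project a voxel vector with a fixed (hypothetical) weight
    matrix, then L2-normalize the result into a unit-length embedding."""
    emb = [sum(w * v for w, v in zip(row, voxels)) for row in weights]
    norm = math.sqrt(sum(x * x for x in emb)) or 1.0  # avoid dividing by zero
    return [x / norm for x in emb]

def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity for vectors that are already unit length."""
    return sum(x * y for x, y in zip(a, b))

def decode(voxels: list[float], weights: list[list[float]],
           candidates: dict[str, list[float]]) -> str:
    """Return the candidate stimulus whose unit embedding best matches the scan."""
    emb = encode(voxels, weights)
    return max(candidates, key=lambda name: cosine(emb, candidates[name]))

# Hypothetical setup: 4 "voxels", 2 embedding dimensions, 2 candidate scenes.
W = [[1.0, 1.0, 0.0, 0.0],
     [0.0, 0.0, 1.0, 1.0]]
CANDIDATES = {"beach": [1.0, 0.0], "city": [0.0, 1.0]}

print(decode([0.9, 0.8, 0.1, 0.0], W, CANDIDATES))  # activity mostly in voxels 0-1
```

With these toy weights, activity concentrated in the first two voxels maps closest to `beach`, and activity in the last two maps closest to `city`.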

Stable Diffusion is best known for AI image generation, alongside technologies like Midjourney and OpenAI’s DALL-E. It is a deep learning model that generates images by starting from random noise in a compressed (latent) representation and removing that noise step by step, guided by a text prompt. However, “it can also be applied to other tasks such as inpainting, outpainting, generating image-to-image translations guided by a text prompt,”1 and diffusion models more broadly have been applied to problems in fields like anomaly detection and natural language processing.
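The core denoising idea can be sketched in a few lines. This is a minimal illustration of the standard diffusion forward process, assuming a linear noise schedule with illustrative values: clean data is blended with Gaussian noise, and if you know (or, in a real model, predict) that noise, you can invert the blend. In Stable Diffusion the data is a latent image and a U-Net predicts the noise under text guidance; here an “oracle” simply hands back the true noise, and the function names are ours, not part of any Stable Diffusion API.

```python
import math
import random

def alpha_bars(num_steps: int, beta_start: float = 1e-4,
               beta_end: float = 0.02) -> list[float]:
    """Cumulative products of (1 - beta_t) for a linear noise schedule (num_steps > 1)."""
    out, prod = [], 1.0
    for t in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * t / (num_steps - 1)
        prod *= 1.0 - beta
        out.append(prod)
    return out

def add_noise(x0: list[float], t: int, abar: list[float],
              rng: random.Random) -> tuple[list[float], list[float]]:
    """Forward process: jump from clean data x0 straight to noisy x_t."""
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    a = abar[t]
    xt = [math.sqrt(a) * x + math.sqrt(1.0 - a) * e for x, e in zip(x0, eps)]
    return xt, eps

def estimate_x0(xt: list[float], eps_pred: list[float], t: int,
                abar: list[float]) -> list[float]:
    """Invert the forward equation given a noise prediction."""
    a = abar[t]
    return [(x - math.sqrt(1.0 - a) * e) / math.sqrt(a) for x, e in zip(xt, eps_pred)]

rng = random.Random(0)
abar = alpha_bars(1000)
x0 = [0.5, -0.2, 0.9]                     # stands in for a (latent) image
xt, eps = add_noise(x0, 999, abar, rng)   # at the last step, x_t is almost pure noise
x0_hat = estimate_x0(xt, eps, 999, abar)  # oracle noise, so x0 is recovered
```

A trained model never sees the true noise; its whole job is to predict `eps` well enough that repeated small denoising steps land on a plausible image.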

Augmented Stable Diffusion extends Stable Diffusion from still images to video reconstruction from brain activity. Instead of a text prompt, the model is conditioned on the features produced by the fMRI encoder, and it is adapted to generate sequences of frames rather than single images. According to the researchers, this approach outperforms previous methods and produces videos that resemble the visual experiences recorded in the brain.
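Stable Diffusion feeds its text prompt into the image generator through cross-attention. Assuming, as a simplification, that the fMRI features are plugged into that same slot in place of text embeddings, the mechanism looks like the sketch below: each image position (a query) takes a weighted average of the conditioning tokens’ values, weighted by how well it matches their keys. This is a single-head toy with no learned projections; the real model uses learned weight matrices and multiple attention heads.

```python
import math

def softmax(xs: list[float]) -> list[float]:
    """Numerically stable softmax."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def cross_attention(queries: list[list[float]], keys: list[list[float]],
                    values: list[list[float]]) -> list[list[float]]:
    """Single-head scaled dot-product cross-attention.

    queries: one vector per image-latent position (from the denoising network)
    keys/values: one vector per conditioning token (text in standard Stable
    Diffusion; assumed here to be fMRI-derived embeddings instead)
    """
    d = len(keys[0])
    out = []
    for q in queries:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        out.append([sum(w * v[i] for w, v in zip(weights, values))
                    for i in range(len(values[0]))])
    return out

# A query that strongly matches the first conditioning token copies its value.
print(cross_attention([[10.0, 0.0]], [[10.0, 0.0], [-10.0, 0.0]],
                      [[1.0, 1.0], [0.0, 0.0]]))
```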

OK…Enough of the Geekery.

How It All Works

Image Source: arXiv:2305.11675v1 [cs.CV] 19 May 2023

The fMRI encoder uses unsupervised learning (a machine learning process that discovers patterns and insights in data without explicit guidance or labels) to extract generic visual features, and the augmented Stable Diffusion model is fine-tuned to generate high-quality videos from these features. Experiments on publicly available datasets show that the reconstructed videos capture motion and scene dynamics accurately, achieving an average accuracy of 85% on semantic metrics. Rather than measuring pixel-level similarity, these metrics use a pretrained classifier to check whether a reconstructed video is recognized as showing the same kind of content as the original clip.
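As we understand the evaluation, a semantic metric of this kind is an N-way classification test: a pretrained classifier scores the reconstruction against a set of classes, and a trial counts as correct when the class assigned to the original clip outscores N-1 randomly drawn other classes. The sketch below assumes that setup; the function names, the number of trials, and the toy scores are illustrative, not the paper’s evaluation code.

```python
import random

def n_way_top1(scores: list[float], true_class: int, n_way: int,
               rng: random.Random) -> bool:
    """One trial: does the true class outscore n_way - 1 random distractor classes?"""
    others = [c for c in range(len(scores)) if c != true_class]
    distractors = rng.sample(others, n_way - 1)
    return all(scores[true_class] > scores[d] for d in distractors)

def n_way_accuracy(all_scores: list[list[float]], true_classes: list[int],
                   n_way: int = 2, trials: int = 100, seed: int = 0) -> float:
    """Average N-way top-1 accuracy over all reconstructions and repeated trials.

    all_scores[i]: classifier scores for reconstruction i (one per class)
    true_classes[i]: class the same classifier assigns to the original clip
    """
    rng = random.Random(seed)
    hits = total = 0
    for scores, label in zip(all_scores, true_classes):
        for _ in range(trials):
            hits += n_way_top1(scores, label, n_way, rng)
            total += 1
    return hits / total

# Toy example: 3 classes; the classifier favors class 1 for this reconstruction.
print(n_way_accuracy([[0.1, 0.7, 0.2]], [1], n_way=2))
```

Chance level is 1/N, so the same raw score means more in a 50-way test than in a 2-way test.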

Applied to video, augmented Stable Diffusion considers not only the spatial information within each frame but also the temporal dynamics between consecutive frames, factoring in the motion, changes, and correlations between pixels across multiple frames.
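One common way to make an image model attend across time is a causal temporal attention mask, in which each frame may look only at itself and a few earlier frames, helping consecutive frames stay consistent. The sketch below assumes a fixed look-back `window`; it is a generic illustration, and the exact attention pattern used in MinD-Video may differ.

```python
import math

def temporal_attention_mask(num_frames: int, window: int) -> list[list[bool]]:
    """mask[i][j] is True if frame i may attend to frame j.

    Causal: frame i sees itself and at most `window` earlier frames,
    never later ones.
    """
    return [[0 <= i - j <= window for j in range(num_frames)]
            for i in range(num_frames)]

def masked_softmax(scores: list[float], allowed: list[bool]) -> list[float]:
    """Softmax over one row of attention scores; masked-out frames get zero weight."""
    m = max(s for s, ok in zip(scores, allowed) if ok)
    exps = [math.exp(s - m) if ok else 0.0 for s, ok in zip(scores, allowed)]
    total = sum(exps)
    return [e / total for e in exps]

for row in temporal_attention_mask(4, window=1):
    print(["x" if ok else "." for ok in row])
# ['x', '.', '.', '.']
# ['x', 'x', '.', '.']
# ['.', 'x', 'x', '.']
# ['.', '.', 'x', 'x']
```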

Capturing dynamic visual experiences such as video has long been a challenge in neuroscience. Research combining fMRI with AI and machine learning has made significant progress in reconstructing still images from brain activity. However, fMRI captures brain activity only once every few seconds, far more slowly than the frame rate of video, which limits the ability to reconstruct continuous visual experiences. Non-invasive technologies like fMRI also have inherent limitations, such as susceptibility to noise and the high cost and time required to collect data.
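A quick calculation shows the size of the mismatch. Assuming a scan every 2 seconds, 30 fps video, and a 4-second delay between what is on screen and the blood-flow response the scanner measures (all illustrative values, not figures from the paper), each scan lumps together 60 frames. `frames_for_scan` is a hypothetical helper for this arithmetic.

```python
import math

def frames_for_scan(scan_index: int, tr_s: float, fps: float,
                    lag_s: float = 0.0) -> range:
    """Video frames whose onsets fall in the window one scan reflects.

    The scan acquired at time scan_index * tr_s is assumed to reflect what
    was on screen lag_s seconds earlier (a crude stand-in for the
    hemodynamic delay). Returns an empty range before the lag has elapsed.
    """
    window_start = scan_index * tr_s - lag_s
    window_end = window_start + tr_s
    first = max(0, math.ceil(window_start * fps))
    last = max(0, math.ceil(window_end * fps))  # exclusive upper bound
    return range(first, last)

print(len(frames_for_scan(0, tr_s=2.0, fps=30.0)))         # 60 frames per scan
print(frames_for_scan(3, tr_s=2.0, fps=30.0, lag_s=4.0))   # range(60, 120)
```

Any reconstruction model must therefore generate dozens of distinct frames from a single, blurred-in-time snapshot of brain activity.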

Elon Musk, What Are the Implications?

With its ability to learn representations from the fMRI encoder and align them with the original neural activity, augmented Stable Diffusion is a game-changer in video reconstruction technology. By bridging the gap between neuroscience and these cutting-edge technologies, researchers are opening the door to improved brain-computer interfaces (BCIs), which connect the brain directly to external devices or computers. Such interfaces could help unlock the mysteries of the human mind, improve the lives of individuals with disabilities, enhance human-computer interaction, and expand our understanding of brain function.

In the healthcare sector, pharmaceutical companies and research institutions could use MinD-Video’s approach to develop more effective therapeutic interventions, and rehabilitation centers could leverage it to design personalized neurorehabilitation programs. Outside of healthcare, the entertainment industry could be transformed by immersive virtual reality experiences based on real-time brain activity.

There is vast market potential for what we’ll term, for now, “Cinematic Mindscapes.” The market for AI-enabled medical devices is projected to grow at a compound annual growth rate (CAGR) of 24.35%, reaching $35,458.2 million (about $35.5 billion) by 2032.2 Companies specializing in neuroimaging, AI, virtual reality, and brain-computer interfaces are likely to drive innovation, and the market for neuroscience research tools and devices may expand as researchers adopt this technology to explore the intricacies of the human brain.
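The growth figure is easy to sanity-check with the standard compound-growth formula, future value = start × (1 + rate)^years. Because the base year of the cited forecast isn’t stated here, this sketch computes only the growth multiple implied by a 24.35% CAGR over an assumed ten-year span, not a starting market size.

```python
def future_value(start: float, rate: float, years: float) -> float:
    """Value after compounding `rate` annually for `years` years."""
    return start * (1.0 + rate) ** years

def cagr(start: float, end: float, years: float) -> float:
    """Compound annual growth rate that turns `start` into `end` over `years`."""
    return (end / start) ** (1.0 / years) - 1.0

# Growth multiple over an assumed ten-year horizon at the quoted CAGR.
growth_multiple = future_value(1.0, 0.2435, 10)
print(round(growth_multiple, 2))
```

Compounding at 24.35% for ten years multiplies the starting value by roughly 8.8, which is why a CAGR in this range produces such steep market curves.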

The Future of “Cinematic Mindscapes” in Neuroscience

The technology raises concerns about privacy, ethics, and potential misuse. Safeguarding individuals’ brain data and ensuring responsible use of this technology are paramount. Regulations and guidelines must be established to protect users’ rights and prevent malicious applications of the technology.

Even so, the impact of MinD-Video’s work is far-reaching. It offers the opportunity to revolutionize neuroscience by opening new avenues for studying the complexities of human perception and cognition and by enhancing therapeutic interventions, personalized medicine, and neurorehabilitation. Patients with neurological conditions could benefit from innovative therapies tailored to their individual brain activity patterns. As research progresses and applications evolve, “Cinematic Mindscapes” could transform our understanding of the human brain and improve neurological healthcare.

Vault Bioventures, Inc., is a privately held life science consultancy composed of former pharmaceutical, biotech, medical device and digital therapeutic executives. Metabolics is one of Vault’s core areas of expertise, interest, and research.

For more information on how we can help your organization design and differentiate your products, launch and sustain better brands, or transform and accelerate your business model, email us or call us at +1 (855) 483-4838.

1 Wikipedia contributors. (2023, June 14). Stable Diffusion. In Wikipedia, The Free Encyclopedia. Retrieved 22:06, June 15, 2023, from https://en.wikipedia.org/w/index.php?title=Stable_Diffusion&oldid=1160112824

2 Research and Markets (2023) Global Artificial Intelligence/Machine Learning Medical Device Market 2023: Growing demand for wearable sensors to increase adoption of AI-enabled medical devices for home-based care, GlobeNewswire News Room. Available at: https://www.globenewswire.com/en/news-release/2023/04/27/2655979/28124/en/Global-Artificial-Intelligence-Machine-Learning-Medical-Device-Market-2023-Growing-Demand-for-Wearable-Sensors-to-Increase-Adoption-of-AI-Enabled-Medical-Devices-for-Home-Based-Car.html (Accessed: 15 June 2023).